RuntimeError: CUDA out of memory. Tried to allocate - Can I solve this problem? - windows - PyTorch Forums

Description

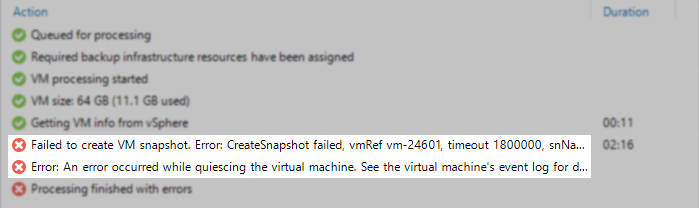

Hello everyone. I am trying to get CUDA working with the OpenAI Whisper release. My current setup works fine on CPU with the medium.en model. I have installed CUDA-enabled PyTorch on a Windows 10 computer, but when I try speech-to-text decoding with CUDA enabled, it fails with a GPU memory error: RuntimeError: CUDA out of memory. Tried to allocate 70.00 MiB (GPU 0; 4.00 GiB total capacity; 2.87 GiB already allocated; 0 bytes free; 2.88 GiB reserved in total by PyTorch) If reserved memory is >> allo
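To make the numbers in that error easier to read, here is a minimal sketch that parses the message with the standard library and compares the sizes. The helper name `parse_oom_message` and the regular expressions are my own illustration, not part of PyTorch, and they assume the message layout quoted in the post.

```python
import re

# Error text copied from the post above (up to the point where it is cut off).
ERROR = (
    "RuntimeError: CUDA out of memory. Tried to allocate 70.00 MiB "
    "(GPU 0; 4.00 GiB total capacity; 2.87 GiB already allocated; "
    "0 bytes free; 2.88 GiB reserved in total by PyTorch)"
)

# Multipliers that convert each unit to MiB.
UNIT_TO_MIB = {
    "bytes": 1 / (1024 * 1024),
    "KiB": 1 / 1024,
    "MiB": 1.0,
    "GiB": 1024.0,
}

UNITS = r"(bytes|KiB|MiB|GiB)"


def parse_oom_message(message: str) -> dict:
    """Hypothetical helper: return the sizes quoted in the message, in MiB."""
    patterns = {
        "requested": rf"Tried to allocate ([\d.]+) {UNITS}",
        "total": rf"([\d.]+) {UNITS} total capacity",
        "allocated": rf"([\d.]+) {UNITS} already allocated",
        "free": rf"([\d.]+) {UNITS} free",
        "reserved": rf"([\d.]+) {UNITS} reserved",
    }
    sizes = {}
    for name, pattern in patterns.items():
        match = re.search(pattern, message)
        if match:
            sizes[name] = float(match.group(1)) * UNIT_TO_MIB[match.group(2)]
    return sizes


sizes = parse_oom_message(ERROR)

# Memory that PyTorch has reserved but is not currently using for tensors.
slack_mib = sizes["reserved"] - sizes["allocated"]

print(f"requested:                {sizes['requested']:.2f} MiB")
print(f"total capacity:           {sizes['total']:.2f} MiB")
print(f"reserved but unallocated: {slack_mib:.2f} MiB")
```

For this message, the gap between reserved and allocated memory is about 10 MiB, which is smaller than the 70 MiB request, so reserved is not much larger than allocated here.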

anon8231489123/gpt4-x-alpaca-13b-native-4bit-128g · out of memory

CUDA out of memory · Issue #1699 · ultralytics/yolov3 · GitHub

CUDA out of memory after error - PyTorch Forums

Experimental Python tasks (beta) - task description

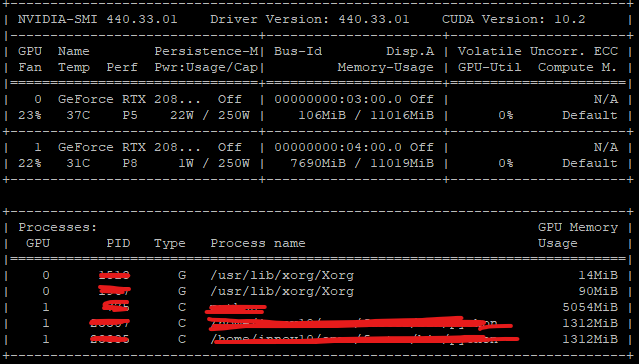

CUDA out of memory, but it shows enough memory available in error

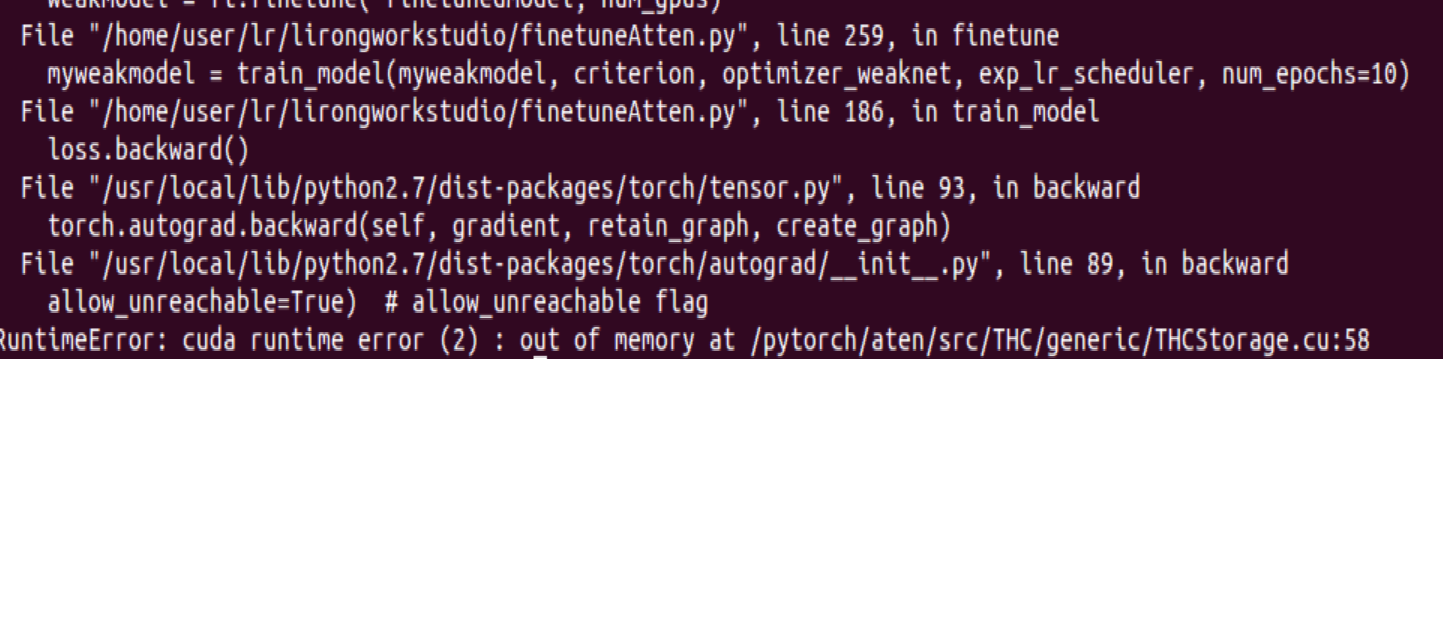

RuntimeError: cuda runtime error (2) : out of memory at /pytorch

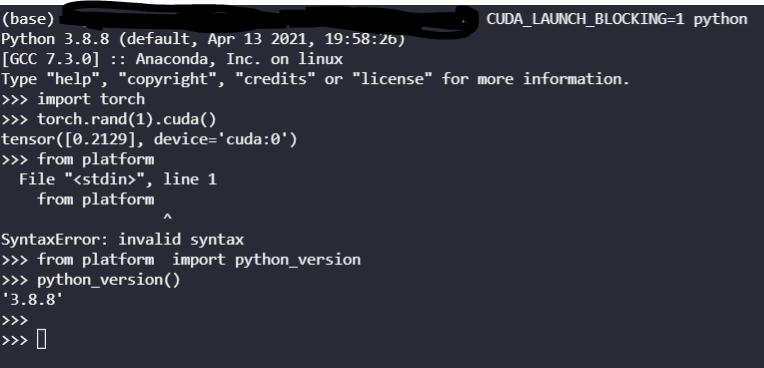

Confusing RuntimeError: CUDA error: device-side assert triggered

Allocated Memory Try Setting max_split_size_mb To Avoid

RuntimeError: CUDA error: CUBLAS_STATUS_ALLOC_FAILED when calling
