[PDF] Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning

Description

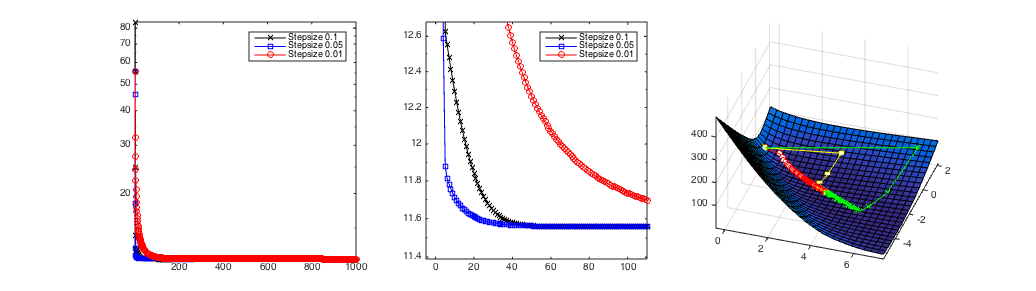

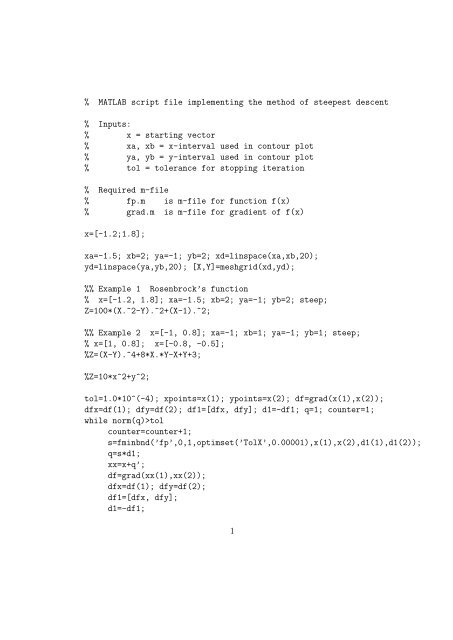

We analyze the conjugate gradient (CG) method with variable preconditioning for solving a linear system with a real symmetric positive definite (SPD) coefficient matrix $A$. We assume that the preconditioner is SPD on each step, and that the condition number of the preconditioned system matrix is bounded above by a constant independent of the step number. We show that, under this assumption, the CG method with variable preconditioning may give no improvement over the steepest descent (SD) method. We describe the basic theory of CG methods with variable preconditioning, with emphasis on "worst case" scenarios, and provide complete proofs of all facts not available in the literature. We also give a new elegant geometric proof of the SD convergence rate bound.

Our numerical experiments, comparing the preconditioned SD and CG methods, not only support and illustrate our theoretical findings, but also reveal two surprising and potentially practically important effects. First, we analyze variable preconditioning in the form of inner-outer iterations. In previous such tests, the unpreconditioned CG inner iterations are applied to an artificial system with some fixed preconditioner as the matrix of coefficients. We test a different scenario, where the unpreconditioned CG inner iterations solve linear systems with the original system matrix $A$. We demonstrate that the CG-SD inner-outer iterations perform as well as the CG-CG inner-outer iterations in these tests. Second, we compare the CG methods using a two-grid preconditioning with fixed and randomly chosen coarse grids, and observe that the fixed preconditioner method is twice as slow as the method with random preconditioning.
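To make the comparison in the abstract concrete, here is a minimal sketch of preconditioned SD and preconditioned CG in which the preconditioner may change on every step. It uses the standard textbook forms of both iterations and is not taken from the paper. The helper names (`laplacian_1d`, `make_random_diagonal_preconditioner`, `apply_prec`), the 1D Laplacian test matrix, and the random diagonal preconditioner are all assumptions chosen for illustration; the diagonal entries are drawn from `[1, kappa_b]`, so each preconditioner is SPD and the condition number of the preconditioned matrix stays bounded independently of the step number.

```python
import numpy as np


def laplacian_1d(n):
    """SPD test matrix (hypothetical choice): 1D Laplacian, tridiag(-1, 2, -1)."""
    return 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)


def energy_norm(A, e):
    """A-norm (energy norm) of the vector e."""
    return float(np.sqrt(e @ (A @ e)))


def make_random_diagonal_preconditioner(kappa_b, seed):
    """Illustrative variable preconditioner: a new SPD diagonal matrix on
    each step k, with entries in [1, kappa_b]. Not the paper's preconditioner."""
    def apply_prec(k, r):
        rng = np.random.default_rng(seed + k)
        d = rng.uniform(1.0, kappa_b, size=r.shape)
        return d * r
    return apply_prec


def preconditioned_sd(A, b, apply_prec, x0, num_steps):
    """Preconditioned steepest descent with exact line search."""
    x = x0.copy()
    for k in range(num_steps):
        r = b - A @ x
        s = apply_prec(k, r)           # preconditioned residual
        sAs = s @ (A @ s)
        if sAs == 0.0:                 # residual vanished: converged
            break
        x = x + ((r @ s) / sAs) * s    # minimize the energy error along s
    return x


def preconditioned_cg(A, b, apply_prec, x0, num_steps):
    """Standard preconditioned CG recurrences, with the preconditioner
    allowed to change on every step."""
    x = x0.copy()
    r = b - A @ x
    s = apply_prec(0, r)
    p = s.copy()
    rs = r @ s
    for k in range(num_steps):
        Ap = A @ p
        pAp = p @ Ap
        if pAp == 0.0 or rs == 0.0:    # nothing left to correct
            break
        alpha = (r @ p) / pAp          # exact line search along p
        x = x + alpha * p
        r = r - alpha * Ap
        s = apply_prec(k + 1, r)       # a different preconditioner each step
        rs_new = r @ s
        p = s + (rs_new / rs) * p      # standard beta = (r_new, s_new) / (r, s)
        rs = rs_new
    return x


n, steps = 50, 30
A = laplacian_1d(n)
b = np.ones(n)
x_exact = np.linalg.solve(A, b)
prec = make_random_diagonal_preconditioner(kappa_b=4.0, seed=0)

x_sd = preconditioned_sd(A, b, prec, np.zeros(n), steps)
x_cg = preconditioned_cg(A, b, prec, np.zeros(n), steps)
print("SD energy-norm error:", energy_norm(A, x_exact - x_sd))
print("CG energy-norm error:", energy_norm(A, x_exact - x_cg))
```

Passing `lambda k, r: r` as `apply_prec` recovers the unpreconditioned methods, which gives a baseline for judging how much a changing preconditioner erodes the usual advantage of CG over SD on a given problem.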

